Sense of Embodiment in Virtual Reality, Spatial-Temporal Modulation of Human Body Expressions

Utilizing computer technology, Sony CSL researcher Shunichi Kasahara is building a research and engineering framework called “Superception” (super+perception) with the intention of transforming and expanding human perception and recognition.

To showcase Superception, Kasahara has been working with YCAM (Yamaguchi Center for Arts and Media) to research human embodiment using virtual body expressions rendered with computer graphics.

Superception of embodiment

The feeling of body ownership, the sense that “this is my body,” enables malleable adaptation to an environment or tool while expanding physical sensation. Eliciting this feeling for something other than the body itself is called the body ownership illusion. A common example is the “rubber hand illusion”: through simultaneous visual and tactile stimulation, an imitation (rubber) hand is perceived as one’s own hand. Similarly, it is possible to feel body ownership over computer-generated body expressions in an immersive virtual reality space.

Our focus is on making the sense of body malleable. By applying spatial-temporal modulation to the movements that define one’s physical expressions in virtual reality space, our system creates changes in the sense of embodiment, including perceived variations in body weight.

Malleable Embodiment System

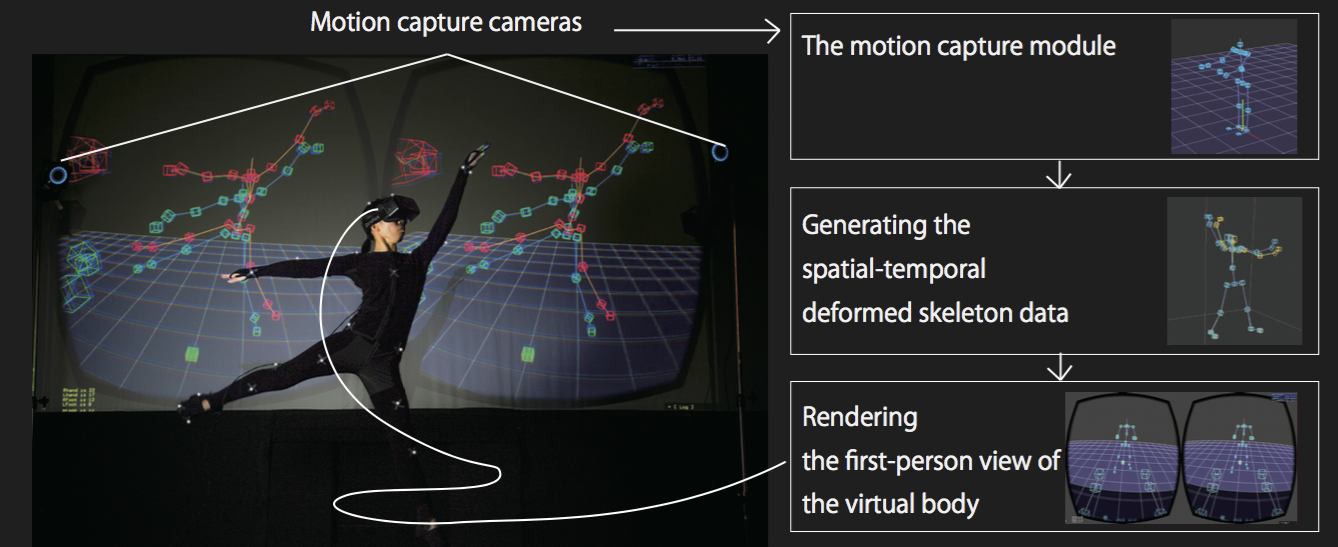

We developed a system that captures full-body movement and, by deforming it, generates estimated past and future body movements. The system uses motion capture to measure body movements in real time. From sequences of human movement data, it then estimates virtual body movements at past and future moments in time. Through a head-mounted display, participants see their own bodies as slightly deformed. Our results show that spatial-temporal deformation of a virtual body changes the sense of body even though the physical body itself does not change.
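The idea of showing a body shifted toward the past or the future can be illustrated with a minimal sketch. It is not the system's actual estimation method, which this page does not describe. The sketch assumes a pose is a list of (x, y, z) joint positions, replays the near past by interpolating between buffered frames, and approximates the near future by linear extrapolation from the two most recent frames. The class name `TemporalDeformer` and its methods are hypothetical.

```python
from collections import deque


class TemporalDeformer:
    """Hypothetical helper: returns a pose shifted in time from captured frames."""

    def __init__(self, max_frames=120):
        # Ring buffer of (timestamp_seconds, pose); pose is a list of (x, y, z).
        self.frames = deque(maxlen=max_frames)

    def push(self, t, pose):
        # Timestamps are assumed to be strictly increasing.
        self.frames.append((t, [tuple(joint) for joint in pose]))

    def pose_at(self, offset):
        """offset < 0: near-past pose; offset > 0: extrapolated near-future pose."""
        frames = list(self.frames)
        if len(frames) < 2:
            raise ValueError("need at least two captured frames")
        # Clamp to the oldest buffered frame if the request reaches too far back.
        target = max(frames[-1][0] + offset, frames[0][0])
        # Default to the two newest frames (used when extrapolating forward).
        (t0, p0), (t1, p1) = frames[-2], frames[-1]
        for a, b in zip(frames, frames[1:]):
            if a[0] <= target <= b[0]:
                (t0, p0), (t1, p1) = a, b
                break
        # w in [0, 1] interpolates; w > 1 extrapolates (assumption: linear motion).
        w = (target - t0) / (t1 - t0)
        return [
            tuple(c0 + w * (c1 - c0) for c0, c1 in zip(j0, j1))
            for j0, j1 in zip(p0, p1)
        ]


# Usage: one joint moving along x at 1 unit per second, sampled at 10 Hz.
deformer = TemporalDeformer()
for i in range(3):
    deformer.push(i * 0.1, [(i * 0.1, 0.0, 0.0)])
future_pose = deformer.pose_at(0.1)    # 100 ms toward the future
past_pose = deformer.pose_at(-0.15)    # 150 ms toward the past
```

Linear extrapolation is only a stand-in here. It overshoots on rapid changes of direction, so a real system would likely need a more careful predictor and small offsets to keep the virtual body plausible.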

We conducted a workshop study with a range of participants, including people who use their bodies professionally, such as dancers. As a general tendency, deformation toward the near future induced lighter, nimbler, and more vitalized sensations. In contrast, deformation toward the near past induced heavier, duller, and diminished sensations in motion. On the other hand, larger deformations led participants to notice the discrepancy between their own body and the virtual body, and then induced no change in their internal sense of body.

### Possibility of computational engineering for sense of body

The research results open up new possibilities for computational control of how a person’s body is perceived, along with the associated effects on feelings and emotions. Our results suggest how to design a system intended to maintain the sense of body ownership during virtual body expressions. Control of these unconscious sensations is expected to find application in rehabilitation, full-body dance creation, and the transformation of physical sensation for sporting activities.

Publication and talks

Shunichi Kasahara, Keina Konno, Richi Owaki, Tsubasa Nishi, Akiko Takeshita, Takayuki Ito, Shoko Kasuga, and Junichi Ushiba. 2017. Malleable Embodiment: Changing Sense of Embodiment by Spatial-Temporal Deformation of Virtual Human Body. In Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems (CHI ’17). ACM, New York, NY, USA, 6438-6448. DOI:https://doi.org/10.1145/3025453.3025962