Whose Touch Is This? Understanding the Agency Trade-off Between User-driven Touch vs. Computer-driven Touch

ACM Transactions on Computer-Human Interaction (full paper)

Daisuke Tajima, Jun Nishida, Pedro Lopes, and Shunichi Kasahara.

Force-feedback interfaces actuate the user’s body to touch involuntarily (using exoskeletons or electrical muscle stimulation); we refer to this as computer-driven touch. Unfortunately, forcing users to touch causes a loss of their sense of agency. While we previously found that delaying the timing of computer-driven touch preserves agency, that work only considered the naive case in which user-driven touch is aligned with computer-driven touch. We argue this is unlikely, as it assumes we can perfectly predict user touches. But what about all the remaining situations: when the haptics force the user into an outcome they did not intend, or assist the user in an outcome they would not achieve alone? We unveil, via an experiment, what happens in these novel situations. From our findings, we synthesize a framework that enables researchers of digital-touch systems to trade off haptic assistance against sense of agency.

Tajima, Daisuke, Jun Nishida, Pedro Lopes, and Shunichi Kasahara. 2022. “Whose Touch Is This?: Understanding the Agency Trade-Off Between User-Driven Touch vs. Computer-Driven Touch.” ACM Trans. Comput.-Hum. Interact., 24, 29 (3): 1–27.

https://dl.acm.org/doi/abs/10.1145/3489608

This is a collaborative research project with the Superception Group at Sony CSL and the Human Computer Integration Lab at the University of Chicago.

In Human-Computer Integration, you and a computer may both be driving your body. When that happens, how do we feel about the resulting body movement, and do we attribute it to ourselves as our own action? This question has remained open since our previous project (Preemptive Action, ACM CHI 2019). Here, we explored more complex situations.

We designed a cognitively demanding choice task to which you respond with two hands, and added electrical muscle stimulation (EMS) that can generate computer-driven touches. This means that while you respond to the task, the computer also responds through your hands.

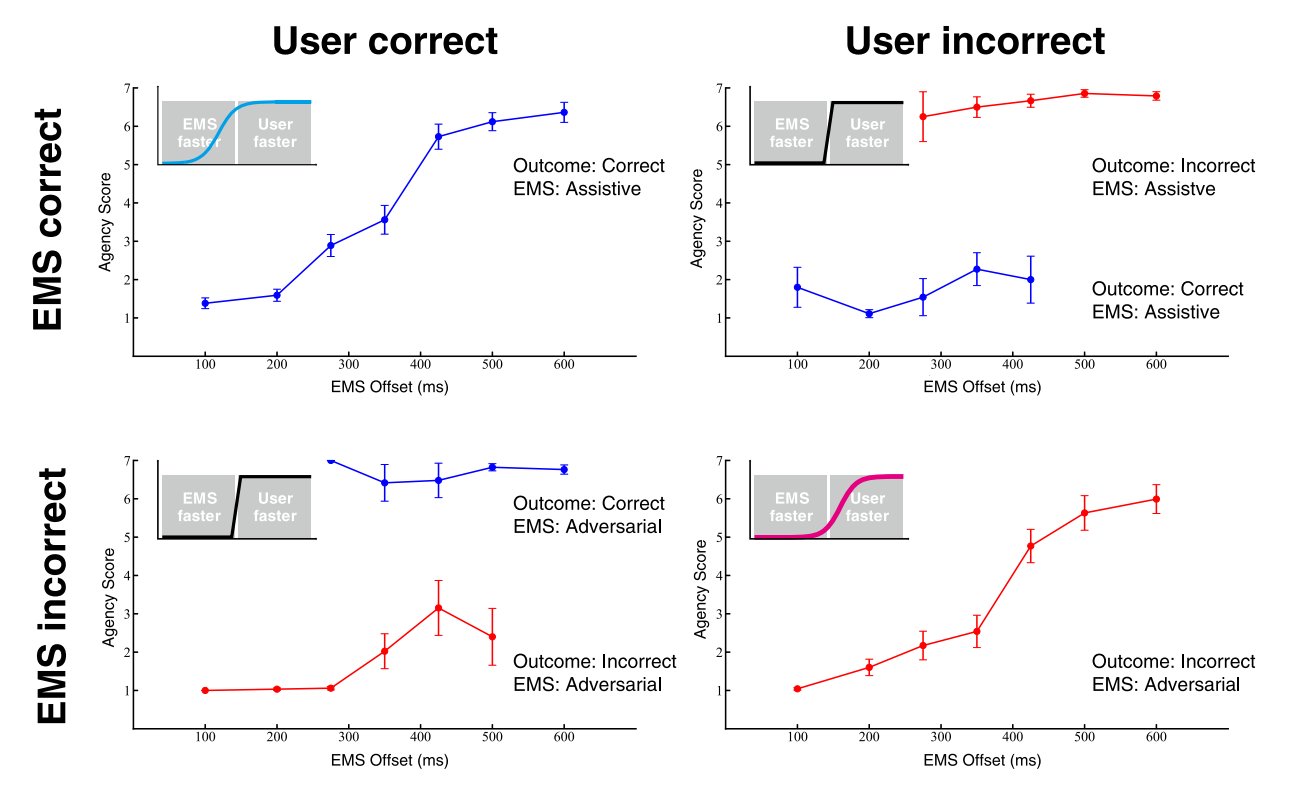

Our experiment yields four interesting quadrants of computer-driven and user-driven touch: 1) joint success, 2) forced failure, 3) forced success, and 4) joint failure. We investigated how users report their sense of agency under various EMS timings in each of the four quadrants.
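As a rough illustration, the four quadrants named above can be read as a two-by-two combination of whether the user-driven touch and the computer-driven touch would each reach the correct target. The sketch below is only our reading of the quadrant labels, not code from the study: the names `Quadrant` and `classify_trial` are hypothetical, and it assumes each trial can be reduced to two booleans.

```python
from enum import Enum


class Quadrant(Enum):
    """The four quadrant labels used in the text."""
    JOINT_SUCCESS = "joint success"
    FORCED_FAILURE = "forced failure"
    FORCED_SUCCESS = "forced success"
    JOINT_FAILURE = "joint failure"


def classify_trial(user_correct: bool, computer_correct: bool) -> Quadrant:
    """Label one trial (hypothetical helper, assumed mapping).

    user_correct: the user-driven touch would hit the correct target.
    computer_correct: the computer-driven (EMS) touch hits the correct target.
    """
    if user_correct and computer_correct:
        return Quadrant.JOINT_SUCCESS
    if user_correct:
        # The user was right, but the computer forced a wrong outcome.
        return Quadrant.FORCED_FAILURE
    if computer_correct:
        # The user was wrong, but the computer assisted a right outcome.
        return Quadrant.FORCED_SUCCESS
    return Quadrant.JOINT_FAILURE
```

For example, `classify_trial(user_correct=False, computer_correct=True)` gives `Quadrant.FORCED_SUCCESS`, the case where the haptics assist the user in an outcome they would not achieve alone.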

We found different agency profiles (agency being the feeling of self-control) depending on whether the user-driven touch and the computer-driven touch are congruent; the timing of the computer-driven touch influences the sense of agency, and the outcome affects it too. From these insights, we synthesized a quadrant framework that lets digital-touch systems trade off haptic assistance against sense of agency in Human-Computer Integration.